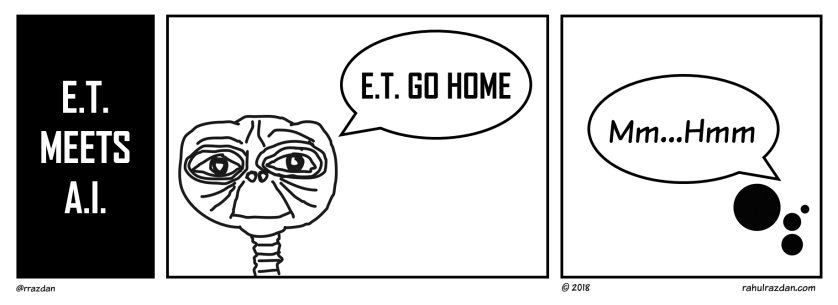

Let’s admit it. Everybody seems to have had a reaction to the ‘mm…hmm’ moment during the Google I/O 2018 event last month. The cheeky ‘mm…hmm’ interjection (part of the Google Assistant’s purported phone call to book an appointment with a salon) drew titters from the crowd at the event, the kind you mostly hear at stand-up gigs.

This instant crowd reaction reminded some of the January 2007 Macworld keynote, where Steve Jobs introduced the iPhone for the first time and the awestruck crowd collectively GASPED when they saw the ‘pinch-zoom’ feature.

And therein lies the difference.

The pinch-zoom experience was a classic WYSIWYG promise which Apple completely delivered on.

The Google Duplex demonstration, on the other hand, with due respect to Google’s outstanding research strength, has evoked a barrage of questions from people coming from a variety of perspectives.

Some people have questioned the authenticity of the call itself; some have raised moral issues around the caller not disclosing that it is a bot; some have pointed out that this would clog phone lines at establishments, making it a non-starter in practice; some have seen humour in bots on both sides (user and business) conversing with each other; while others see doomsday in such interactions taking place between non-humans.

But all these arguments take a big leap of faith: that the technology Google demonstrated would deliver at multiple points of the value chain, right up to the last mile.

However, there are multiple reference points which suggest that what you get (WYG) is going to be far from what you see (WYS).

COME ON, EVEN AUTO-CORRECT ISN’T THERE YET!

From the days of desktop word-processing, through T9 predictive text on numeric mobile keypads, to QWERTY touchscreen tapping, to Swype-based keyboards, to speech-to-text dictation on contemporary OS keyboards: none of these popular input methods has delivered accuracy anywhere close to 100%. Users still have to double-check for factual and contextual accuracy and, worst of all, for grammar. (Yes, auto-correct falling prey to the ‘your/you’re’ or ‘there/their’ homophone mix-up is still a reality!)

And this is linear, one-directional word and sentence creation we’re talking about. Iterative interactions add to the complexity, bringing additional context as well as noise with every exchange. Now imagine a bot that negotiates and fixes appointments for you, committing your time and other resources, behind your back. Mm…hmm.

HOW DOES ALADDIN BRIEF HIS GENIE?

Yes, it is commendable that while the whole world has been busy working on chat-bot solutions to ease the communication overload on the business side, Google put the ‘digital assistant’ on the side of the user, and did so using a medium that’s still quite familiar to most people: the voice call.

But what remains unexplored is how users, in the first place, communicate the instructions to their digital assistants to fix that appointment. And these instructions would not be restricted to date and time input alone. You’ve got to provide ranges and boundaries on time slots, along with the order of preference, to equip your digital assistants for their mm…hmm-embellished negotiations.

And that is not an easy task at all.
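To get a feel for how much a user would have to spell out, here is a minimal sketch of such a brief in Python. Everything in it is hypothetical and invented for illustration (the `AppointmentBrief` and `TimeWindow` names, the `pick_slot` helper, the dates); it says nothing about how Google Duplex actually takes instructions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class TimeWindow:
    """An acceptable range: the appointment must start and finish inside it."""
    start: datetime
    end: datetime


@dataclass
class AppointmentBrief:
    """Hypothetical instructions a user hands to an assistant."""
    service: str
    duration: timedelta
    windows: List[TimeWindow]  # listed in order of preference, best first


def pick_slot(brief: AppointmentBrief, offered: List[datetime]) -> Optional[datetime]:
    """Return the offered start time that fits the most-preferred window, or None."""
    for window in brief.windows:       # respect the user's order of preference
        for start in sorted(offered):  # earliest fitting slot within that window
            if window.start <= start and start + brief.duration <= window.end:
                return start
    return None                        # nothing fits, so the assistant must not commit


brief = AppointmentBrief(
    service="haircut",
    duration=timedelta(minutes=45),
    windows=[
        TimeWindow(datetime(2018, 6, 9, 10, 0), datetime(2018, 6, 9, 12, 0)),  # first choice
        TimeWindow(datetime(2018, 6, 8, 17, 0), datetime(2018, 6, 8, 19, 0)),  # fallback
    ],
)

# Slots the salon offers during the call (made-up values).
salon_offers = [datetime(2018, 6, 8, 17, 30), datetime(2018, 6, 9, 11, 30)]
chosen = pick_slot(brief, salon_offers)
print(chosen)
```

Here the 11:30 offer falls inside the first-choice window but would overrun its noon boundary, so the sketch falls back to the 17:30 slot. Even this toy version needs a duration, two ranges and a ranking from the user, and it still ignores travel time, price limits and everything else a real negotiation would touch.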

Calendars and scheduling applications have been in existence for decades, and yet there aren’t any killer apps in that category. There is a fundamental UX fail-zone that calendars and schedulers never seem to get out of.

Yet there are other services which have solved this in the context of their respective domains, significantly reducing friction on the users’ side. Interestingly, quite a few of them have gone on to become multi-billion-dollar businesses, aided in no small measure by how they handled the UX. Think cab-hailing, travel bookings and restaurant table bookings.

Until Google (or any other service) solves this first-stage problem, it may unintentionally be nurturing the medium-term failure of this project. This is not to say that Google won’t be able to solve it. In fact, if they do, that by itself would be a breakthrough worthy of its own mm…hmm equivalents.

BOTS ‘TALKING TO’ BOTS

A lot of people have had a field day conjuring hilarious satires and farcical scenarios where the users’ Google Assistants’ phone calls are received by the businesses’ equivalent voice bots. Some have pointed out that two computer systems talking to each other already have several ways of communicating: through code, protocols, APIs, query languages and so on. Getting them to make a ‘voice phone call’ to each other doesn’t add any efficiency to the interaction, except maybe letting them utter sweet nothings as well.

But the end result — getting computers to ‘talk’ to each other — isn’t the end objective. The end objective is helping users interact with establishments in the most efficient manner. Or is it?

Maybe our A.I. scientists feel an even greater pressure to pay obeisance to their obsession with humanizing computers. But why the obsession?

That obsession is rooted in anthropomorphism: giving human-like qualities to non-human entities such as animals, machines and cars.

For nearly 100 years now, the animation industry has struck gold with anthropomorphism. Long before that, another industry had extensively used anthropomorphism to create persuasive stories and characters: religion!

With unprecedented computing resources at its disposal today, the A.I. industry sees itself at the same inflexion point that the animation industry was at with Toy Story. Hence the obsession.

BLIND-SIDED BY OBSESSION

As some have pointed out, if you asked the A.I. inventors of today to make a kitchen appliance that would clean utensils, they would deep-dive into the global collective knowledge pool, summon all the computing resources they have, spend an eternity making a waterproof humanoid bot that would stand by the kitchen sink, and try to make it wash, lather, rinse and dry the utensils exactly as humans do, while all you needed was a dishwasher!

Or, if today’s inventors went back to Steve Jobs’ demo in 2007, they would probably fancy making a realistic robotic hand that could pinch-zoom!

The argument has been made several times over that when the motor vehicle industry was at its first inflexion point, the inventors of the day, fortunately, were not attempting to mimic a horse’s morphology but were making a paradigm jump with engines and pistons.

That’s where the inventors of today need to draw their inspiration from: first principles. Not human mimicry!